In a previous tutorial we showed how to install Openvswitch on a Qemu image with Microcore Linux. At the end of that tutorial we created an Openvswitch extension and submitted it to the Microcore public repository. Assuming that Openvswitch is configured and functional, we are ready to build three labs that help us test features such as VLANs, trunks and interVLAN routing.

LAB 1 Access VLAN Configuration And Testing

In this lab we are going to test connectivity between PCs which reside in the same VLAN. PC1 and PC2 are assigned to VLAN 10, and PC3 and PC4 reside in VLAN 20. According to theory, each VLAN should have its own separate subnet. In our case, however, we want to test not only connectivity between computers in the same VLAN but also connectivity between PCs placed in different VLANs.

For this reason I chose a single subnet 192.168.1.0/24 for all the computers, so that from the L3 point of view e.g. PC1 (VLAN 10) and PC3 (VLAN 20) are in the same network. Of course, computers assigned to different VLANs are separated on Layer 2, so we do not expect any connectivity between them.

Picture 1 - Openvswitch with Configured VLANs on Access Ports

1) Openvswitch configuration

Log in as tc; no password is set.

a) Let's create bridge br0

sudo ovs-vsctl add-br br0

b) Add access port eth0 to the bridge br0 and assign it to VLAN 10

sudo ovs-vsctl add-port br0 eth0 tag=10

c) Assign remaining ports to the br0

sudo ovs-vsctl add-port br0 eth2 tag=10

sudo ovs-vsctl add-port br0 eth1 tag=20

sudo ovs-vsctl add-port br0 eth3 tag=20

d) Assign hostname to Microcore

sudo hostname openvswitch

echo "hostname openvswitch" >> /opt/bootlocal.sh

e) Save conf.db file to keep it persistent after the next reboot of Microcore Linux

/usr/bin/filetool.sh -b
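The port-to-VLAN assignments from steps a) to c) can also be generated from a small table, which makes it harder to mistype a single command. The following is a minimal sketch, assuming the port layout used in this lab; the function name `lab1_commands` and the variable `PORT_VLANS` are hypothetical. The script only prints the `ovs-vsctl` commands instead of running them, so the output can be reviewed first.

```shell
#!/bin/sh
# Print the ovs-vsctl commands for the LAB 1 access ports.
# Nothing is executed here; the commands are only written to stdout.

# port:vlan pairs taken from the LAB 1 configuration
PORT_VLANS="eth0:10 eth2:10 eth1:20 eth3:20"

lab1_commands() {
    echo "ovs-vsctl add-br br0"
    for pair in $PORT_VLANS; do
        port=${pair%%:*}   # text before the colon
        vlan=${pair##*:}   # text after the colon
        echo "ovs-vsctl add-port br0 $port tag=$vlan"
    done
    # print the resulting bridge, ports and tags for a visual check
    echo "ovs-vsctl show"
}

lab1_commands
```

If the script were saved as, say, lab1.sh (a hypothetical name), its output could be applied with `sh lab1.sh | sudo sh` once it looks right.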

2) PC1 configuration

Log in as tc; no password is set.

Assign IP address 192.168.1.1/24 to eth0 and make it persistent after next reboot of Microcore

sudo hostname PC1

sudo ifconfig eth0 192.168.1.1 netmask 255.255.255.0

echo "hostname PC1" >> /opt/bootlocal.sh

echo "ifconfig eth0 192.168.1.1 netmask 255.255.255.0" >> /opt/bootlocal.sh

/usr/bin/filetool.sh -b

3) PC2 to PC4 configuration

Configure all remaining computers with the correct IP parameters, in the same way as it was done in point 2).
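Since the remaining PCs differ only in their number, the commands from point 2) can be produced by a small helper. This is a sketch under the assumption that PCn uses the address 192.168.1.n, which matches the addresses pinged later in this lab; the function name `pc_commands` is hypothetical and the commands are printed, not executed.

```shell
#!/bin/sh
# Print the configuration commands for PC number N.
# Assumes the addressing scheme PCn = 192.168.1.n.

pc_commands() {
    n=$1
    ip="192.168.1.$n"
    echo "sudo hostname PC$n"
    echo "sudo ifconfig eth0 $ip netmask 255.255.255.0"
    echo "echo \"hostname PC$n\" >> /opt/bootlocal.sh"
    echo "echo \"ifconfig eth0 $ip netmask 255.255.255.0\" >> /opt/bootlocal.sh"
    echo "/usr/bin/filetool.sh -b"
}

# Example: the commands to type on PC3
pc_commands 3
```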

4) Access VLAN connectivity test

a) Connectivity test between PCs which reside in the same VLAN

Issue ping from PC1 (VLAN10) to PC2 (VLAN10).

tc@PC1:~$ ping 192.168.1.2

PING 192.168.1.2 (192.168.1.2): 56 data bytes

64 bytes from 192.168.1.2: seq=0 ttl=64 time=26.666 ms

64 bytes from 192.168.1.2: seq=1 ttl=64 time=0.000 ms

64 bytes from 192.168.1.2: seq=2 ttl=64 time=3.334 ms

64 bytes from 192.168.1.2: seq=3 ttl=64 time=0.000 ms

--- 192.168.1.2 ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 0.000/6.000/26.666 ms

tc@PC1:~$

Issue ping from PC3 (VLAN20) to PC4 (VLAN20).

tc@PC3:~$ ping 192.168.1.4

PING 192.168.1.4 (192.168.1.4): 56 data bytes

64 bytes from 192.168.1.4: seq=0 ttl=64 time=3.334 ms

64 bytes from 192.168.1.4: seq=1 ttl=64 time=3.333 ms

64 bytes from 192.168.1.4: seq=2 ttl=64 time=3.333 ms

64 bytes from 192.168.1.4: seq=3 ttl=64 time=0.000 ms

--- 192.168.1.4 ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 0.000/1.666/3.334 ms

tc@PC3:~$

As expected, ping worked in both cases.

b) Test connectivity between computers placed in different VLANs

Issue ping from PC1 (VLAN10) to PC4 (VLAN20).

tc@PC1:~$ ping 192.168.1.4

PING 192.168.1.4 (192.168.1.4): 56 data bytes

--- 192.168.1.4 ping statistics ---

11 packets transmitted, 0 packets received, 100% packet loss

We can see that ping is not working between PC1 and PC4. This confirms that there is no connectivity between computers placed in different VLANs and that openvswitch is working correctly.
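The rule behind this result is simple: an access port only exchanges frames with ports that carry the same VLAN tag. The sketch below is a toy model of that rule using the LAB 1 port tags; it does not query Open vSwitch, and the function names `port_vlan` and `same_vlan` are hypothetical.

```shell
#!/bin/sh
# Toy model of access-port isolation: two ports can exchange frames
# only if they carry the same VLAN tag. This does not talk to Open vSwitch.

# Return the VLAN tag configured for a port in LAB 1.
port_vlan() {
    case "$1" in
        eth0|eth2) echo 10 ;;
        eth1|eth3) echo 20 ;;
        *)         echo none ;;
    esac
}

# Succeed (exit status 0) when both ports are known and share a VLAN.
same_vlan() {
    a=$(port_vlan "$1")
    b=$(port_vlan "$2")
    [ "$a" != none ] && [ "$a" = "$b" ]
}

same_vlan eth0 eth2 && echo "eth0 <-> eth2: forwarded"
same_vlan eth0 eth1 || echo "eth0 <-> eth1: isolated"
```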

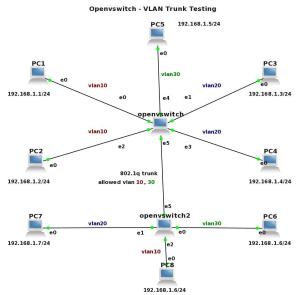

LAB 2 VLAN Trunk Configuration And Testing

In the previous LAB we showed that openvswitch only forwards traffic between computers which reside in the same VLAN. In this LAB we are going to add an additional switch to the topology, openvswitch2, and connect the two switches with a link configured as an 802.1Q trunk. Access VLANs 10, 20 and 30 are configured on openvswitch2, and VLAN 30 is added to openvswitch. Only VLAN 10 and VLAN 30 are allowed on both sides of the trunk.

Our goal is to show that only traffic from VLAN 10 and VLAN 30 is forwarded between switches.

Picture 2 - Openvswitches Connected with a Link Configured as 802.1Q Trunk

1) Openvswitch configuration

Assuming that the previous configuration of openvswitch has been saved, we only add the following commands.

a) Add access port eth4 to the bridge br0 and assign it to VLAN 30

sudo ovs-vsctl add-port br0 eth4 tag=30

b) Add trunk port eth5 to bridge br0 and allow VLAN 10 and 30 on the trunk

sudo ovs-vsctl add-port br0 eth5 trunks=10,30

c) Save configuration of Microcore

/usr/bin/filetool.sh -b

2) Openvswitch2 configuration

sudo hostname openvswitch2

echo "hostname openvswitch2" >> /opt/bootlocal.sh

sudo ovs-vsctl add-br br0

sudo ovs-vsctl add-port br0 eth5 trunks=10,30

sudo ovs-vsctl add-port br0 eth0 tag=30

sudo ovs-vsctl add-port br0 eth1 tag=20

sudo ovs-vsctl add-port br0 eth2 tag=10

/usr/bin/filetool.sh -b

Similarly, configure IP parameters for PC5 to PC8 according to the topology.

3) Trunk VLAN test

a) Issue the ping from PC1 (VLAN10) to PC8 (VLAN10)

tc@PC1:~$ ping 192.168.1.8

PING 192.168.1.8 (192.168.1.8): 56 data bytes

64 bytes from 192.168.1.8: seq=0 ttl=64 time=6.667 ms

64 bytes from 192.168.1.8: seq=1 ttl=64 time=3.333 ms

64 bytes from 192.168.1.8: seq=2 ttl=64 time=0.000 ms

64 bytes from 192.168.1.8: seq=3 ttl=64 time=3.334 ms

^C

--- 192.168.1.8 ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 0.000/3.333/6.667 ms

tc@PC1:~$

We can see that traffic in VLAN 10 is successfully transferred through the trunk. If the same VLAN exists on two switches, we call it an end-to-end VLAN.

b) Issue the ping from PC5 (VLAN30) to PC6 (VLAN30)

tc@PC5:~$ ping 192.168.1.6

PING 192.168.1.6 (192.168.1.6): 56 data bytes

64 bytes from 192.168.1.6: seq=0 ttl=64 time=6.667 ms

64 bytes from 192.168.1.6: seq=1 ttl=64 time=3.334 ms

64 bytes from 192.168.1.6: seq=2 ttl=64 time=3.333 ms

64 bytes from 192.168.1.6: seq=3 ttl=64 time=3.333 ms

^C

--- 192.168.1.6 ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 3.333/4.166/6.667 ms

tc@PC5:~$

From what we see, the trunk is successfully carrying traffic marked with tag 30 between the switches.

c) Issue the ping from PC3 (VLAN20) to PC7 (VLAN20)

tc@PC3:~$ ping 192.168.1.7

PING 192.168.1.7 (192.168.1.7): 56 data bytes

--- 192.168.1.7 ping statistics ---

6 packets transmitted, 0 packets received, 100% packet loss

tc@PC3:~$

Traffic from VLAN 20 is not forwarded between the switches, because VLAN 20 is not allowed on the trunk. If all VLANs need to be allowed on the trunk, the trunks parameter should be left without any specific VLAN; in Open vSwitch a port with no tag and an empty trunks list carries all VLANs.
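The filtering done by the trunk can be expressed as a simple membership test on the trunks list. The sketch below is a toy model, not a query against Open vSwitch; the function name `vlan_on_trunk` is hypothetical. It treats an empty list as "all VLANs allowed", which corresponds to a port configured with neither tag nor trunks.

```shell
#!/bin/sh
# Toy model of the trunk filter: is a VLAN carried by a port whose
# trunks list is given as a comma-separated string?
# An empty list means that every VLAN is carried.

vlan_on_trunk() {
    vlan=$1
    trunks=$2
    [ -z "$trunks" ] && return 0        # empty list: every VLAN passes
    case ",$trunks," in
        *",$vlan,"*) return 0 ;;        # VLAN is listed
        *)           return 1 ;;        # VLAN is filtered out
    esac
}

# The three VLANs of this lab against the trunk configured as trunks=10,30
for v in 10 20 30; do
    if vlan_on_trunk "$v" "10,30"; then
        echo "VLAN $v: forwarded over the trunk"
    else
        echo "VLAN $v: dropped at the trunk"
    fi
done
```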

LAB 3 L3 VLAN interfaces, InterVLAN routing - Configuration And Testing

In this last LAB we are going to create L3 VLAN interfaces for VLAN 10, 20 and 30. After that we will check whether Microcore can forward traffic between different VLANs. We assume that the particular ports were added to VLANs in the previous LABs, so only the additional configuration is shown in this LAB. I have also simplified the topology and created a new IP plan for the devices.

Picture 3 - InterVLAN Routing Testing Topology

1) Openvswitch configuration

a) Add an internal port vlan10 to bridge br0 as a VLAN access port for VLAN 10

sudo ovs-vsctl add-port br0 vlan10 tag=10 -- set interface vlan10 type=internal

b) Create vlan20 and vlan30 interfaces

sudo ovs-vsctl add-port br0 vlan20 tag=20 -- set interface vlan20 type=internal

sudo ovs-vsctl add-port br0 vlan30 tag=30 -- set interface vlan30 type=internal

c) Add access ports eth4 and eth5 to the bridge br0 and assign them to VLAN 30

First, we need to delete the configuration for eth4 and eth5 that was done in the previous LABs.

sudo ovs-vsctl del-port br0 eth4

sudo ovs-vsctl del-port br0 eth5

Now we can assign ports to the VLAN 30.

sudo ovs-vsctl add-port br0 eth4 tag=30

sudo ovs-vsctl add-port br0 eth5 tag=30

d) Assign IP addresses to the VLAN interfaces

sudo ifconfig vlan10 192.168.10.254 netmask 255.255.255.0

sudo ifconfig vlan20 192.168.20.254 netmask 255.255.255.0

sudo ifconfig vlan30 192.168.30.254 netmask 255.255.255.0

echo "ifconfig vlan10 192.168.10.254 netmask 255.255.255.0" >> /opt/bootlocal.sh

echo "ifconfig vlan20 192.168.20.254 netmask 255.255.255.0" >> /opt/bootlocal.sh

echo "ifconfig vlan30 192.168.30.254 netmask 255.255.255.0" >> /opt/bootlocal.sh

/usr/bin/filetool.sh -b

e) Enable IPv4 and IPv6 packet forwarding between interfaces

Forwarding between network interfaces is disabled by default. To let Microcore forward IPv4 and IPv6 packets you need to enable it:

sudo sysctl -w net.ipv4.ip_forward=1

sudo sysctl -w net.ipv6.conf.all.forwarding=1

echo "sysctl -w net.ipv4.ip_forward=1" >> /opt/bootlocal.sh

echo "sysctl -w net.ipv6.conf.all.forwarding=1" >> /opt/bootlocal.sh

/usr/bin/filetool.sh -b
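Steps a), b) and d) follow one pattern per VLAN: an internal port named vlanN tagged with N, and the gateway address 192.168.N.254 on it. A minimal sketch of that pattern follows, assuming the IP plan used in this LAB; the function name `vlan_interface_commands` is hypothetical and the commands are only printed.

```shell
#!/bin/sh
# Print the commands that create one internal port per VLAN and assign
# the gateway address 192.168.<vlan>.254 to it (LAB 3 IP plan).
# Commands are only printed, not executed.

vlan_interface_commands() {
    for vlan in "$@"; do
        echo "ovs-vsctl add-port br0 vlan$vlan tag=$vlan -- set interface vlan$vlan type=internal"
        echo "ifconfig vlan$vlan 192.168.$vlan.254 netmask 255.255.255.0"
    done
    # routing between the VLAN interfaces needs kernel forwarding
    echo "sysctl -w net.ipv4.ip_forward=1"
}

vlan_interface_commands 10 20 30
```

Whether forwarding is currently active can be checked with `cat /proc/sys/net/ipv4/ip_forward`, which prints 1 when it is enabled.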

2) PC1 to PC6 - IP address, netmask, default gateway - configuration

a) Delete old IP addresses from the computers

We should split the IP address space so that each VLAN has its own subnet. First, on each computer delete the line with the old IP address, assigned in LAB1, from /opt/bootlocal.sh (in the vi editor, the dd command deletes the current line).

b) Assign IP address and default GW to PC1 to PC6

Example for PC1:

sudo ifconfig eth0 192.168.10.1 netmask 255.255.255.0

sudo route add default gw 192.168.10.254

echo "ifconfig eth0 192.168.10.1 netmask 255.255.255.0" >> /opt/bootlocal.sh

echo "route add default gw 192.168.10.254" >> /opt/bootlocal.sh

/usr/bin/filetool.sh -b

Similarly, do it for all the PCs, according to the topology and IP address plan.
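Instead of deleting the old line by hand in vi, the change to /opt/bootlocal.sh can be scripted. The following is a sketch only: the function name `update_bootlocal` is hypothetical, and it works on whatever file is passed to it, so it can be tried on a copy before touching the real file.

```shell
#!/bin/sh
# Replace the old eth0 address line in a bootlocal.sh-style file with
# the LAB 3 address and default gateway. Operates on the file given as $1.

update_bootlocal() {
    file=$1
    new_ip=$2
    gateway=$3
    # drop any old "ifconfig eth0 ..." and "route add default ..." lines
    sed -e '/^ifconfig eth0 /d' -e '/^route add default /d' "$file" > "$file.tmp"
    mv "$file.tmp" "$file"
    # append the new address and default gateway
    echo "ifconfig eth0 $new_ip netmask 255.255.255.0" >> "$file"
    echo "route add default gw $gateway" >> "$file"
}

# Demonstration on a scratch file that mimics PC1 after LAB 1
demo=$(mktemp)
printf '%s\n' '#!/bin/sh' 'hostname PC1' \
    'ifconfig eth0 192.168.1.1 netmask 255.255.255.0' > "$demo"
update_bootlocal "$demo" 192.168.10.1 192.168.10.254
cat "$demo"
rm -f "$demo"
```

On a real PC the change still has to be saved with /usr/bin/filetool.sh -b afterwards.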

3) InterVLAN routing testing

As long as the PCs have the correct IP address, netmask and default gateway assigned, we can ping either a PC in the same VLAN or any of the vlan interfaces.

a) Issue ping from PC1 (VLAN10) to PC2 (VLAN10)

tc@PC1:~$ ping 192.168.10.2

PING 192.168.10.2 (192.168.10.2): 56 data bytes

64 bytes from 192.168.10.2: seq=0 ttl=64 time=3.333 ms

64 bytes from 192.168.10.2: seq=1 ttl=64 time=3.333 ms

64 bytes from 192.168.10.2: seq=2 ttl=64 time=3.334 ms

64 bytes from 192.168.10.2: seq=3 ttl=64 time=3.333 ms

--- 192.168.10.2 ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 3.333/3.333/3.334 ms

tc@PC1:~$

b) Issue ping from PC1 (VLAN10) to interface vlan20

tc@PC1:~$ ping 192.168.20.254

PING 192.168.20.254 (192.168.20.254): 56 data bytes

64 bytes from 192.168.20.254: seq=0 ttl=64 time=3.333 ms

64 bytes from 192.168.20.254: seq=1 ttl=64 time=0.000 ms

64 bytes from 192.168.20.254: seq=2 ttl=64 time=0.000 ms

64 bytes from 192.168.20.254: seq=3 ttl=64 time=3.333 ms

--- 192.168.20.254 ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 0.000/1.666/3.333 ms

tc@PC1:~$

We can see that in both cases ping was successful.

c) Issue the ping from PC1 (VLAN10) to PC3 (VLAN20)

tc@PC1:~$ ping 192.168.20.1

PING 192.168.20.1 (192.168.20.1): 56 data bytes

64 bytes from 192.168.20.1: seq=0 ttl=63 time=0.000 ms

64 bytes from 192.168.20.1: seq=1 ttl=63 time=3.333 ms

64 bytes from 192.168.20.1: seq=2 ttl=63 time=3.333 ms

64 bytes from 192.168.20.1: seq=3 ttl=63 time=3.334 ms

--- 192.168.20.1 ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 0.000/2.500/3.334 ms

tc@PC1:~$

Thanks to forwarding between interfaces being enabled in the kernel, ping works between different VLANs. Microcore with Openvswitch is acting as a Layer 3 switch.
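The routing step is also visible in the ping output: replies from the same VLAN arrive with ttl=64, while replies from PC3 arrive with ttl=63, because each router on the path decrements the TTL by one. The sketch below extracts that number from a line of ping output; the function name `hops_from_ttl` is hypothetical, and it assumes the replying host starts at a TTL of 64, which is the usual Linux default.

```shell
#!/bin/sh
# Estimate how many routers a ping reply crossed from its TTL value.
# Assumes the replying host sends with an initial TTL of 64.

hops_from_ttl() {
    # $1 is one line of ping output, e.g. "... seq=0 ttl=63 time=0.000 ms"
    ttl=$(echo "$1" | sed -n 's/.*ttl=\([0-9][0-9]*\).*/\1/p')
    [ -n "$ttl" ] || return 1           # no ttl= field found
    echo $((64 - $ttl))
}

# Reply from PC2 in the same VLAN, then from PC3 in another VLAN
hops_from_ttl "64 bytes from 192.168.10.2: seq=0 ttl=64 time=3.333 ms"
hops_from_ttl "64 bytes from 192.168.20.1: seq=0 ttl=63 time=0.000 ms"
```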

End.

Thank you for your technical article. It's very useful to me. But, I have an question as follows:

I use qemu command with your image to run an "openvswitch" and "PC1" instances. But, I still cannot ping PC1 using openvswitch. In other words, I don't see any association with PC1's eth0 and openvswitch's eth0. Is there any wrong with my environment or what key point do I miss?

Thank you very much.

TeYen Liu

Hi,

do you run your lab in the GNS3 environment? If yes, what OS do you use? Remember, if Qemu is installed on Linux, you have to patch Qemu for UDP tunnels. If you use Windows and your Qemu was downloaded from GNS3 page, it should have been already patched and you need to look for problem elsewhere.

Hello,

No, I didn't know that I need to use GNS3 environment. My Qemu is installed on Ubuntu. Do I need to install VirtualBox just as same as your another article "VirtualBox 4.1.0 Installation and Configuration for GNS3 0.8.0 on Fedora 15 Linux"? Is it just OK if I patch Qemu for UDP tunnels? If possible, could you give me more information?

Thank you.

Hello,

In fact, you don't need to use GNS3, but it is much easier to create and run your topology with it. Jobs such as creating clones of the base Qemu image, starting and stopping Qemu VMs and even configuring parameters of Qemu images are all done by GNS3 for you with just a few mouse clicks.

Now, back to your question. It is absolutely necessary to patch Qemu for UDP tunnels and multicast. Without doing it no traffic will flow between interfaces. Hope it won't be big deal for you.

Hello,

I see. It is indeed very convenient to run my topology with GNS3. By the way, I cannot open a console on my qemu host with the preconfiguated terminal command as follows. It seems that my qemu host doesn't accept the telnet connection. Is anything wrong with that? I try to use ssh, but still doesn't work.

Preconfigurated terminal command:

gnome-terminal -t %d -e 'telnet %h %p' >/dev/null 2>&1 &

P.S: The qemu host image is from your Part I

Thank you for your help

Thanks Brezular for the nice tutorials about ovs!

Keep up with your work.

Hi

I am trying these test beds under Ubuntu Linux m/c.

I have a question in Lab2.

Are the two switches openswitch1 and openswitch 2 in the same m/c or on the different m/c.

I am exploring if I can create 2 openvswitches in the same box and connect them through a Trunk link.. If it is possible, I do not know how to connect these 2 switches?

With openswitch, I can created 2 switches and attached with a few 'tap' interfaces. but I could not figure out how connect these 2 switch together.?

Hi!

I think in LAB 1. the command at b) "sudo ovs-ctl add-port br0 br0 eth0 tag=10" and

c) "sudo ovs-ctl add-port br0 eth2 tag=10

sudo ovs-ctl add-port br0 eth1 tag=20

sudo ovs-ctl add-port br0 eth3 tag=20"

are not correct. there is no command "ovs-ctl" and two times br0 shows error.

After correcting that commands, I could successfully ping PC1 and PC2. But i could not ping PC3 to PC4 although they are on the same VLAN. I did exactly what you have written in the blog.

How to troubleshoot at OpenVSwitch?

Regards

Arefin

Corrected, thanks!

Hi!

I found the problem, why PC3 and PC4 could not communicate in . In my QEMU Microcore4.0.img, eth3 does not work. Is it natural?

Regards

Arefin

Hi!

I am using Qemu in GNS3 with "linux-microcore-3.4.img" and "linux-microcore-4.0-openvswitch-1.2.2-quagga-0.99.20.img" images. In both cases eth3 and eth5 show NO-CARRIER and they don't work at all. Could anyone please explain?

How can I use multiple network interfaces in Qemu host to simulate servers with multiple interfaces?

Regards

Arefin

Hi, I have several question regarding to your lab.

Each PC is actually a virtual machine (a microcore image)? And they are runing on the same host machine? openvswitch is runing on a different VM? (for example, there are 5 different VM in lab1 and they are runing on the same host physical machine?)

If all the statement above are correct, how the VM runing openvswitch is aware the existen of other VMs in lab1? for example in lab1, in the command,

ovs-vsctl add-port br0 eth0 tag=10

If the VM runing openvswitch (denote it as PC0) is different from PC1-PC4 as in your figures in lab1, how VM PC0 know eth0-eth3 are connected to PC1-PC4?

by the way, if PC0 and PC1 are different,

in the comman ovs-vsctl add-port br0 eth0 tag=10, to connect PC1 to bridge br0, but usually, in PC0, eth0 may exist in PC0 if we run command in PC0, so in command , eth0 means PC0 or PC1?

Thank you very much

1/ Each PC is actually a virtual machine (a microcore image)? And they are runing on the same host machine?

YES.

2/ openvswitch is runing on a different VM? (for example, there are 5 different VM in lab1 and they are runing on the same host physical machine?)

All the VM are running on the same host machine (Fedora).

3/ If all the statement above are correct, how the VM runing openvswitch is aware the existen of other VMs in lab1?

A good question. Obviously it's not clear from the tutorial. I should have mentioned that this simulation is made by GNS3. GNS3 cares about connection - it gives commands to qemuwrapper and qemuwrapper send commands to Qemu. You know, qemu is running on host machine.

The following tutorial shows how the connection between two Qemu instances is made:

https://brezular.com/2011/04/02/gns3-qemu-troubleshooting/

I suggest you to install GNS3 and setup lab.

Hi, Brezular,

Had you test the openvswitch feature of QoS limitation in GNS3, for example in your lab1.

http://openvswitch.org/support/config-cookbooks/qos-rate-limiting/

using the commands,

ovs-vsctl set Interface tap0 ingress_policing_rate=1000

ovs-vsctl set Interface tap0 ingress_policing_burst=100

Can you limit the rate for vlan1 and vlan2 separately, for example, set vlan1 rate limit as 1Mbps, and vlan2 rate limit as 10Mbps?

I have test the scenario as shown in the above link, i.e. the openvswitch is in the same host as other VMs.

But if, for example in your lab1, openvswitch is in the different host from the other machine, or VMs, then it doesn't work. the rate always stays in the same value no matter how you set up rate limit using ovs-vsctl.

I don't know whether it is the problem of my configuration or openvswitch is not able to do that.

Thank you.

How did you connect the Openvswitch 1 with Openvswitch2? I am assuming they are two bridges. But how did they the eth5 on bridge1 connected to eth5 on bridge2..

Well i guess they are inter-connected as they are the instants of the same openvswitch process but please confirm.

thanks for your work!

I have a question,when I test "LAB 2 VLAN Trunk Configuration And Testing"

PC1 and PC8 could not communicate in,I used c2600 simulate PC

arp could't get other pc's MAC

I used linux-microcore-4.0-openvswitch-1.2.2.img

sudo ovs-vsctl add-br br0

sudo ovs-vsctl add-port br0 eth1 tag=10

sudo ovs-vsctl add-port br0 eth2 tag=20

sudo ovs-vsctl add-port br0 eth0 trunks=10,20

sudo ovs-vsctl add-br br0

sudo ovs-vsctl add-port br0 eth3 tag=10

sudo ovs-vsctl add-port br0 eth4 tag=20

sudo ovs-vsctl add-port br0 eth0 trunks=10,20

Which Ethernet card do you use?

Awesome tutorial, literally banged my head every where, and finally saw your article somewhere and dug up gold. I have a network setup in mininet, and your tutorial works like a charm in there.

hi,

kindly i want to let openvswitch work in layer3 in gns3.

what are the setps to configure it.

under ryu controller

thanks

Hello

I have openvswitch running in gns3 but subinterfaces created using the following

command ip link add link eth0 name eth0.5 type vlan id 5 do not survive a reboot of the openvswitch.

Please what would you suggest i do

Option1 - Put these commands into the startup script (e.g. /etc/rc.local), depending on your Linux distribution.

Option2 - Put the command into /etc/network/interfaces as follows and then restart the networking service or just reboot.

auto eth0

iface eth0 inet static

address 192.168.1.1

netmask 255.255.0.0

pre-up ip link add link eth0 name eth0.5 type vlan id 5

Hello Brezula.

Please the second option has worked excellently. Thanks so much.

Please having set the vlans and their interfaces correctly I can now ping from R1(192.168.10.1) connected to OVS1(192.168.10.2) on eth1 and from R2(192.168.20.1) to OVS2(192.168.20.2) on eth1 however i cannot ping from R1 to R2. I have configured the links eth2 from OVS1 to OVS2 as a trunk link for both using ovs-vsctl set port eth2 trunks=10,20 on OVS1 and OVS2. Please can you tell me what I am missing?

Hello Brezula. Thanks so much Option2 worked perfectly.

R1(192.168.10.2) connected is to OVS1(192.168.10.1) and both can reach each other. R2(192.168.20.2) can also reach OVS2(192.168.20.1) and vice-versa. However, R1 cannot reach OVS2 and R2 cannot reach OVS1. I have created a trunk port on eth2 of both OVS1 and OVS2 using ovs-vsctl set port eth2 trunks=10,20. Can you please show me what I am missing?

Hello Brezula,

Please option 2 worked.

I am running R1(192.168.10.1) connected to OVS1(192.168.10.2) using vlan10

I am also running R2(192.168.20.1) connected to OVS2(192.168.20.2) using vlan20

I have configured both eth2 ports on OVS1 and OVS2 as trunk ports but I cannot ping between R1 and R2. Is there any thing I am missing?

can we implement this scenario using ovs+dpdk?

Sure. I'll add it to my TODO list.